TurboQuant [3], an online vector quantization method, garnered significant attention at ICLR 2026. To me, it looked strikingly familiar: it shares substantial common ground with EDEN, a compression approach first proposed as the 1-bit technique DRIVE at NeurIPS 2021 [1] and later extended to arbitrary bit-widths at ICML 2022 [2]. I co-authored EDEN alongside Ran Ben-Basat, Yaniv Ben-Itzhak, Gal Mendelson, Michael Mitzenmacher, and Shay Vargaftik.

The TurboQuant publication introduces two versions: TurboQuant-mse and TurboQuant-prod. In our recent thorough evaluation [5], we demonstrate that TurboQuant-mse is actually a simplified variant of EDEN, and that EDEN’s approaches consistently deliver superior performance.

How EDEN compresses a vector

Imagine you want to shrink a $d$-dimensional vector $x$ (such as a gradient update, an embedding, or a KV-cache entry) to just a few bits per dimension. EDEN carries out this compression through four stages:

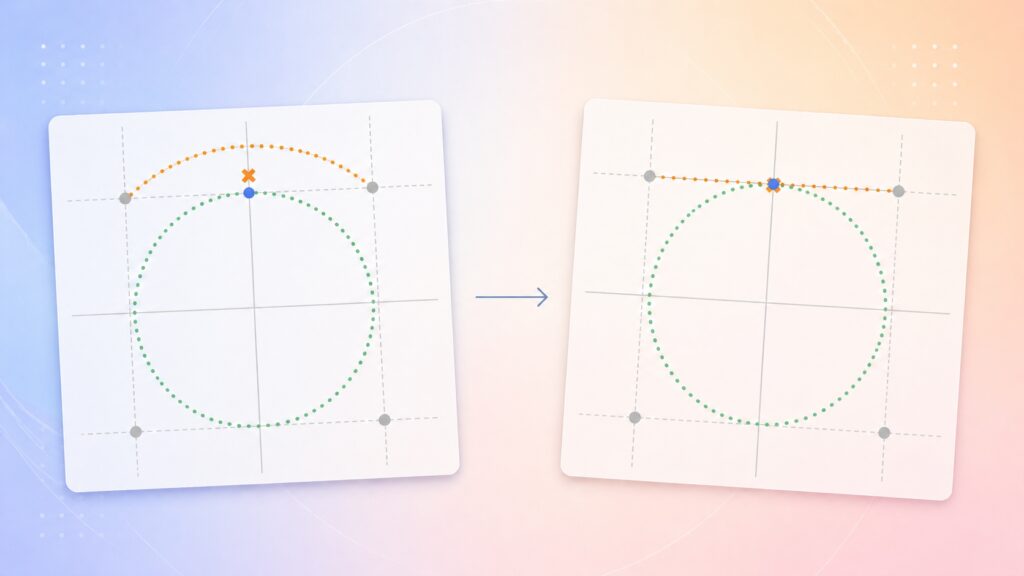

- Random rotation: Apply a random orthogonal transformation $R$. Once rotated, the individual dimensions become identically distributed and, for high-dimensional vectors, closely resemble a Gaussian distribution.

- Scalar quantization: Map each rotated dimension to one of $2^b$ discrete levels from a Lloyd–Max codebook that was trained on the known distribution of the rotated coordinates ($b$ is the desired number of bits per dimension).

- Scale: Multiply the result by a scaling factor $S$.

- Inverse rotation: Undo the rotation by applying $R^{-1}$, producing an approximation $\hat{x}$ of the initial vector.

While prior research (for instance, Suresh et al., 2017 [6]) employed rotation primarily to narrow the dynamic range of coordinates (the spread between the largest and smallest values), EDEN [1] was—as far as we are aware—the first compression method to leverage a more powerful property of random rotation: the resulting coordinates follow a predictable distribution. This enables the use of a fixed quantizer together with a mathematically computed scale that can either minimize MSE or produce an unbiased estimate, depending on the task. Both scaling options are derived through mathematical analysis, and the method achieves an asymptotic improvement in MSE over earlier techniques.
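
To make the "predictable distribution" point concrete, here is a minimal NumPy sketch (not the EDEN reference implementation). It rotates a deliberately spiky vector with a uniformly random orthogonal matrix built by QR decomposition and rescales by $\sqrt{d}/\|x\|$, after which the coordinates should look roughly standard normal. The helper name `random_rotation`, the dimension, and the seed are illustrative assumptions, and a dense rotation matrix is used only for clarity.

```python
import numpy as np

def random_rotation(d, rng):
    """Draw a Haar-random orthogonal matrix via QR of a Gaussian matrix."""
    q, r = np.linalg.qr(rng.standard_normal((d, d)))
    # Fix column signs so the result is uniform over orthogonal matrices.
    return q * np.sign(np.diag(r))

rng = np.random.default_rng(0)
d = 512
x = np.zeros(d)
x[0] = 10.0  # all of the energy sits in a single coordinate

rot = random_rotation(d, rng)
z = (rot @ x) * np.sqrt(d) / np.linalg.norm(x)  # normalized rotated coordinates

print("largest |coordinate| before rotation:", np.abs(x).max())
print("mean / second moment after rotation: ", z.mean(), np.mean(z ** 2))
print("largest |coordinate| after rotation: ", np.abs(z).max())
```

The second moment equals 1 by construction, since rotation preserves the norm. The informative part is that the largest coordinate drops from the full norm of the vector to a few standard deviations, whatever the input looked like.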

Specifically, EDEN’s two versions differ solely in how they determine $S$:

- EDEN-biased: Selects $S$ using the closed-form expression that yields the lowest reconstruction MSE.

- EDEN-unbiased: Picks $S$ so the reconstructed output is correct on average ($\mathbb{E}[\hat{x}] = x$), which is especially important when aggregating many compressed vectors (e.g., in distributed training or attention mechanisms).
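
One way to see where the two scales come from, sketched in notation introduced here rather than quoted from the papers: let $y = Rx$ be the rotated vector, $q$ its quantized version before scaling, and $\hat{x} = S\,R^{-1}q$ the reconstruction. Because $R$ is orthogonal, the reconstruction error equals $\|y - Sq\|^2$, so the MSE-optimal scale is a one-dimensional least-squares solution. The unbiased form below is written under the assumption that it matches the choice in [1] and [2]; it forces $\langle \hat{x}, x\rangle = \|x\|^2$ for every rotation, and the argument that this yields $\mathbb{E}[\hat{x}] = x$ is given in those papers.

$$
\begin{aligned}
\|x - \hat{x}\|^2 &= \|y - Sq\|^2 = \|y\|^2 - 2S\,\langle y, q\rangle + S^2\,\|q\|^2, \\
\frac{\partial}{\partial S}\,\|y - Sq\|^2 = 0 \;&\Longrightarrow\; S_{\text{biased}} = \frac{\langle y, q\rangle}{\|q\|^2}, \\
S_{\text{unbiased}} = \frac{\|x\|^2}{\langle y, q\rangle} \;&\Longrightarrow\; \langle \hat{x}, x\rangle = S_{\text{unbiased}}\,\langle q, y\rangle = \|x\|^2 .
\end{aligned}
$$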

When compared directly with EDEN, TurboQuant-mse aligns at every stage except one: where EDEN computes the scale mathematically, TurboQuant-mse—despite aiming to minimize MSE—omits this optimized scaling step.

The pseudocode below places all three methods side by side.
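
As a runnable counterpart, here is a minimal NumPy sketch of the three methods sharing one rotation and one codebook. Several things in it are assumptions rather than facts from the papers: the function names are hypothetical, the codebook is fitted to a standard Gaussian by running Lloyd's algorithm on samples, the EDEN scales use the least-squares form $\langle z, q\rangle/\|q\|^2$ and the inner-product-preserving form $\|z\|^2/\langle z, q\rangle$, and TurboQuant-mse is modeled simply as the same pipeline with the scale fixed to 1, following the description in this post rather than TurboQuant's code.

```python
import numpy as np

def random_rotation(d, rng):
    """Haar-random orthogonal matrix via QR of a Gaussian matrix."""
    q, r = np.linalg.qr(rng.standard_normal((d, d)))
    return q * np.sign(np.diag(r))

def lloyd_max_levels(bits, rng, n_samples=200_000, iters=30):
    """Approximate Lloyd-Max levels for N(0, 1) with Lloyd's algorithm on samples."""
    samples = rng.standard_normal(n_samples)
    k = 2 ** bits
    levels = np.quantile(samples, (np.arange(k) + 0.5) / k)  # quantile init
    for _ in range(iters):
        edges = (levels[:-1] + levels[1:]) / 2               # nearest-level cells
        cell = np.searchsorted(edges, samples)
        levels = np.array([samples[cell == j].mean() for j in range(k)])
    return levels

def reconstruct(x, rot, levels, method):
    """Rotate, quantize, scale, rotate back. `method` only changes the scale."""
    d = x.size
    norm = np.linalg.norm(x)
    z = (rot @ x) * np.sqrt(d) / norm          # rotated coordinates, unit variance
    edges = (levels[:-1] + levels[1:]) / 2
    q = levels[np.searchsorted(edges, z)]      # scalar quantization
    if method == "turboquant-mse":
        s = 1.0                                # assumed: no per-vector scale
    elif method == "eden-biased":
        s = (z @ q) / (q @ q)                  # least-squares (MSE-optimal) scale
    elif method == "eden-unbiased":
        s = (z @ z) / (z @ q)                  # makes <x_hat, x> equal ||x||^2
    else:
        raise ValueError(f"unknown method: {method}")
    return rot.T @ (s * q) * norm / np.sqrt(d)

rng = np.random.default_rng(0)
d, bits = 128, 4
x = rng.standard_normal(d)
rot = random_rotation(d, rng)
levels = lloyd_max_levels(bits, rng)
sq_err = {m: float(np.sum((reconstruct(x, rot, levels, m) - x) ** 2))
          for m in ("turboquant-mse", "eden-biased", "eden-unbiased")}
print(sq_err)
```

With the rotation and quantized vector held fixed, the least-squares scale can never do worse than a scale of 1 on that vector, which is the sense in which the fixed-scale variant is a special case. The unbiased variant accepts a somewhat larger error on a single vector in exchange for errors that average out across many vectors.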

Why the optimal scale matters

The benefit of using the correct scale increases as the bit-width grows. At bit, the difference is negligible. However, at dimensions and bits, EDEN-biased achieves a 2.25% lower MSE than TurboQuant-mse—and these are the exact bit-widths commonly used in practice for embeddings and KV caches.

Across dimensions ranging from 16 to 4096 and all tested bit-widths, EDEN-biased’s vNMSE (vector-normalized MSE, $\mathbb{E}\|x - \hat{x}\|^2 / \|x\|^2$) remains below TurboQuant-mse’s in every scenario (Figure 2). As the dimensionality becomes very large, the optimal $S$ approaches 1 and the two algorithms converge. However, at practical dimensions (128 to 1024), the performance gap remains significant.
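
A short calculation suggests why the optimal scale tends to 1. It assumes the MSE-optimal scale has the least-squares form $S = \langle z, q\rangle / \|q\|^2$, where $z$ denotes the rotated coordinates normalized to unit variance and $q = Q(z)$ their Lloyd–Max quantization (notation introduced here for illustration). A Lloyd–Max codebook places each level $c_k$ at the conditional mean of its cell $A_k$, so in the large-$d$ limit, where the coordinates behave like i.i.d. Gaussians and empirical averages concentrate:

$$
\begin{aligned}
\tfrac{1}{d}\,\langle z, q\rangle \;&\to\; \mathbb{E}[z\,Q(z)] = \sum_k \Pr[z \in A_k]\; c_k\; \mathbb{E}[z \mid z \in A_k] = \sum_k \Pr[z \in A_k]\; c_k^2, \\
\tfrac{1}{d}\,\|q\|^2 \;&\to\; \mathbb{E}[Q(z)^2] = \sum_k \Pr[z \in A_k]\; c_k^2, \\
S = \frac{\langle z, q\rangle}{\|q\|^2} \;&\to\; 1 .
\end{aligned}
$$

At finite $d$ the empirical ratio deviates from 1 for the particular vector and rotation at hand, and that deviation is what a fixed scale leaves uncorrected.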

Unbiased compression: saving more than a full bit

The findings above focus on the biased (MSE-minimizing) variants. Now let’s examine the unbiased scenario, where tasks like distributed training, approximate attention, or inner-product retrieval require $\mathbb{E}[\hat{x}] = x$ because they average many quantized vectors together.

EDEN-unbiased employs the same single-pass algorithm as EDEN-biased, with $S$ selected specifically for bias correction. TurboQuant’s unbiased counterpart, TurboQuant-prod, follows a different strategy: it allocates $b - 1$ bits to the biased TurboQuant-mse step and sets aside 1 bit for a QJL (Quantized Johnson–Lindenstrauss) [4] correction applied to the residual (QJL behaves similarly to EDEN at $b = 1$, but introduces higher variance).

EDEN-unbiased surpasses TurboQuant-prod in every tested configuration, and by a considerable margin. This advantage stems from three structural strengths of EDEN’s single-pass approach:

- EDEN optimizes the scale factor. TurboQuant-prod inherits TurboQuant-mse’s first stage, meaning it carries the same MSE penalty.

- EDEN’s 1-bit construction produces lower variance than QJL. At large dimensions, EDEN’s 1-bit vNMSE converges to $\pi/2 - 1 \approx 0.57$ [1], whereas QJL’s converges to $\pi/2 \approx 1.57$ [4], roughly 2.75× higher.

- EDEN dedicates the entire bit budget to a single unbiased quantizer. TurboQuant-prod divides the budget into biased bits plus 1 residual bit, which empirically underperforms compared to allocating all bits to a single unbiased quantizer [5].
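
The 1-bit variance gap in the second point can be checked with a small Monte Carlo experiment. The sketch below is illustrative only: the function names are hypothetical, the 1-bit EDEN estimator is assumed to be sign quantization after a dense random rotation with scale $\|x\|^2/\|Rx\|_1$, and QJL is assumed to reconstruct from the signs of a dense $d \times d$ Gaussian projection with a $\sqrt{\pi/2}/d$ correction. For large $d$ the two averages should land near $\pi/2 - 1 \approx 0.57$ and $\pi/2 \approx 1.57$.

```python
import numpy as np

def random_rotation(d, rng):
    """Haar-random orthogonal matrix via QR of a Gaussian matrix."""
    q, r = np.linalg.qr(rng.standard_normal((d, d)))
    return q * np.sign(np.diag(r))

def eden_1bit_unbiased(x, rng):
    """Rotate, keep only signs, rescale so that <x_hat, x> = ||x||^2."""
    rot = random_rotation(x.size, rng)
    y = rot @ x
    scale = (x @ x) / np.abs(y).sum()
    return rot.T @ (scale * np.sign(y))

def qjl_1bit(x, rng):
    """Signs of a Gaussian projection, mapped back with the sqrt(pi/2)/d factor."""
    d = x.size
    g = rng.standard_normal((d, d))
    return np.sqrt(np.pi / 2) / d * np.linalg.norm(x) * (g.T @ np.sign(g @ x))

def vnmse(estimator, x, trials, rng):
    """Average squared reconstruction error, normalized by ||x||^2."""
    errors = [np.sum((estimator(x, rng) - x) ** 2) for _ in range(trials)]
    return float(np.mean(errors) / (x @ x))

rng = np.random.default_rng(0)
x = rng.standard_normal(256)
eden = vnmse(eden_1bit_unbiased, x, 50, rng)
qjl = vnmse(qjl_1bit, x, 50, rng)
print(f"EDEN 1-bit vNMSE ~ {eden:.3f}, QJL vNMSE ~ {qjl:.3f}, ratio ~ {qjl / eden:.2f}")
```

Both estimators spend one bit per coordinate plus one stored scalar, so the difference in error comes from the construction itself: the orthogonal rotation keeps the sign vector's norm fixed, while an unstructured Gaussian projection adds its own fluctuation on top.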

These benefits compound on each other. The outcome is clear: 1-bit, 2-bit, and 3-bit EDEN-unbiased each deliver higher accuracy than 2-bit, 3-bit, and 4-bit TurboQuant-prod, respectively (Figure 3). By switching to EDEN, you can reduce the bit count per coordinate by one while still matching TurboQuant-prod’s accuracy.

On TurboQuant’s own benchmarks

The same trend holds on the standard ANN benchmarks used in TurboQuant’s evaluation: Stanford’s GloVe pre-trained word vectors (Open Data Commons Public Domain Dedication and License v1.0) and Qdrant’s dbpedia-entities-openai3-text-embedding-3-large embeddings (Apache 2.0), assessed using TurboQuant’s published evaluation code:

EDEN-biased achieves lower MSE than TurboQuant-mse, EDEN-unbiased delivers significantly lower inner-product error than TurboQuant-prod, and nearest-neighbor recall on both datasets favors EDEN (Figure 4).

Takeaway: use EDEN; optimal scaling matters

EDEN’s scale factor connects the known post-rotation distribution to an analytically optimal quantizer. TurboQuant-mse retains EDEN’s rotation and codebook but fixes $S = 1$, making it a strictly weaker special case. TurboQuant-prod adds a 1-bit QJL stage on top, while EDEN-unbiased achieves the same unbiasedness property with better accuracy, simply by selecting a bias-correcting scale.

- For MSE-targeted compression (model weight quantization, nearest-neighbor search, KV cache): EDEN-biased calculates the optimal scale and consistently outperforms TurboQuant-mse (which is EDEN with $S = 1$ fixed).

- For unbiased estimation (distributed mean estimation, approximate attention, inner-product retrieval): EDEN-unbiased significantly surpasses TurboQuant-prod’s bit-splitting approach, by margins exceeding a full bit per coordinate.

EDEN was initially created for distributed mean estimation in federated and distributed training. Later research has, for instance, applied it to embedding compression for document re-ranking (SDR, 2022 [8]), adapted it for NVFP4 LLM training (MS-EDEN in Quartet II, 2026 [10]), and extended it to vector quantization for data-free LLM weight compression (HIGGS, 2025 [9]), which in turn was used for KV-cache compression (AQUA-KV, 2025 [11]).

EDEN implementations are available in PyTorch and TensorFlow, in Intel’s OpenFL [7], and its 1-bit variant in Google’s FedJax, TensorFlow Federated, and TensorFlow Model Optimization.

For the complete technical comparison with TurboQuant (all figures, detailed experimental methodology), see our note [5].

For the original derivations, proofs, and additional extensions, see our original papers [1] [2].

References

1. S. Vargaftik, R. Ben-Basat, A. Portnoy, G. Mendelson, Y. Ben-Itzhak, M. Mitzenmacher, DRIVE: One-bit Distributed Mean Estimation (2021), NeurIPS 2021.

2. S. Vargaftik, R. Ben-Basat, A. Portnoy, G. Mendelson, Y. Ben-Itzhak, M. Mitzenmacher, EDEN: Communication-Efficient and Robust Distributed Mean Estimation for Federated Learning (2022), ICML 2022.

3. A. Zandieh, M. Daliri, A. Hadian, V. Mirrokni, TurboQuant: Online Vector Quantization with Near-optimal Distortion Rate (2026), ICLR 2026.

4. A. Zandieh, M. Daliri, I. Han, QJL: 1-Bit Quantized JL Transform for KV Cache Quantization with Zero Overhead (2024), arXiv:2406.03482.

5. R. Ben-Basat, Y. Ben-Itzhak, G. Mendelson, M. Mitzenmacher, A. Portnoy, S. Vargaftik, A Note on TurboQuant and the Earlier DRIVE/EDEN Line of Work (2026), arXiv:2604.18555.

6. A. T. Suresh, F. X. Yu, S. Kumar, H. B. McMahan, Distributed Mean Estimation with Limited Communication (2017), ICML 2017.

7. VMware Open Source Blog, VMware Research Group’s EDEN Becomes Part of OpenFL (November 2022).

8. N. Cohen, A. Portnoy, B. Fetahu, A. Ingber, SDR: Efficient Neural Re-ranking using Succinct Document Representation (2022), ACL 2022.

9. V. Malinovskii, A. Panferov, I. Ilin, H. Guo, P. Richtárik, D. Alistarh, HIGGS: Pushing the Limits of Large Language Model Quantization via the Linearity Theorem (2025), NAACL 2025.

10. A. Panferov, E. Schultheis, S. Tabesh, D. Alistarh, Quartet II: Accurate LLM Pre-Training in NVFP4 by Improved Unbiased Gradient Estimation (2026), arXiv:2601.22813.

11. A. Shutova, V. Malinovskii, V. Egiazarian, D. Kuznedelev, D. Mazur, N. Surkov, I. Ermakov, D. Alistarh, Cache Me If You Must: Adaptive Key-Value Quantization for Large Language Models (2025), ICML 2025.