What a two-person SRE group discovered constructing an AI investigation pipeline. Spoiler: the runbooks mattered greater than the mannequin.

Why we constructed this

At STCLab, our SRE group helps a number of Amazon EKS clusters working high-traffic manufacturing workloads. We’ve received the complete observability stack in place: OpenTelemetry feeding into Mimir, Loki, and Tempo. Robusta OSS enriches Prometheus alerts with error logs, Grafana hyperlinks, and group mentions earlier than dropping them into Slack.

So the info was by no means the issue. The issue was what occurred subsequent. Each alert meant the identical drill: verify the pod, question Prometheus, dig via Loki, pull traces, attempt to correlate. Fifteen to twenty minutes, each single time. We wished that first move to occur robotically and present up in the identical Slack thread.

HolmesGPT: Letting the LLM determine what to analyze

Alerts Pipeline

We went with HolmesGPT (CNCF Sandbox) due to the way it works: the ReAct sample. The LLM reads an alert, picks a device, reads the outcome, then decides what to verify subsequent. If a pod restarts, it would begin with the exit code, pull Loki logs throughout clusters via VPC peering, then have a look at CPU stress in Prometheus. The trail isn’t scripted it relies on what the mannequin really finds.

That issues in our case, as a result of not each namespace appears to be like the identical. Some have the complete image: centralized logs, distributed traces, the works. Multi-tenant workloads typically have none of that; for these namespaces, it’s kubectl and Prometheus solely. We seize these variations in markdown runbooks, every with a metadata header:

## Metascope: namespace=solely instruments: kubectl, prometheus, loki, tempowarning: some containers excluded from log assortment → use kubectl logs

Holmes calls fetch_runbook early in its investigation. The metadata tells it which instruments can be found and which of them to skip.

Making it work with Robusta

Our customized playbook is about 200 traces of Python. It covers what HolmesGPT doesn’t.

Robusta posts the alert to Slack earlier than Holmes is completed investigating, so our playbook has to seek out the appropriate thread after the very fact and publish outcomes as a reply. When Prometheus fires one alert per pod throughout a rollout, the playbook fingerprints on the workload degree and suppresses repeats for half-hour. And since Robusta routes to completely different Slack channels by namespace, the playbook replicates that mapping to seek out the place to publish.

Runbooks modified every thing

We began by specializing in mannequin choice. What really decided investigation high quality was the runbooks.

With out runbooks, the mannequin simply guesses. It’d verify Istio metrics in namespaces that haven’t any sidecars, or question Loki the place nothing is being collected. Finally it loops, burns via its step finances, and comes again with “I need more information.”

What fastened this wasn’t a greater mannequin. It was telling the mannequin what not to do. As soon as we added exclusion guidelines to our runbooks (“no Loki, no Tempo, no Istio here; use kubectl and PromQL only”), wasted device calls dropped from 16 to 2 per investigation.

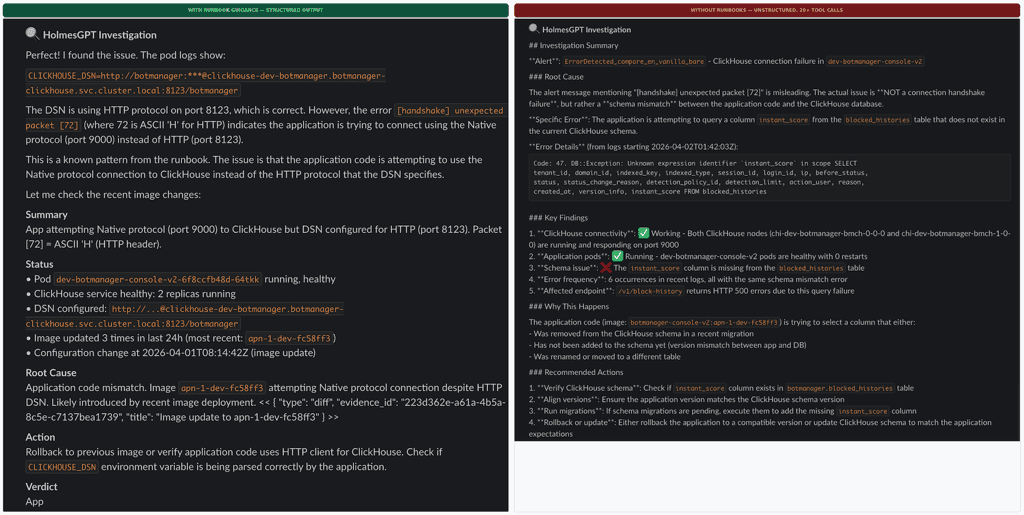

We ran a managed comparability to verify this: the identical ClickHouse handshake alert, examined 4 methods. With runbooks, Holmes matched the recognized error sample in 3 to 4 device calls and used the remainder of its finances to confirm. With out runbooks, it chased three totally completely different hypotheses (proxy scaling, schema mismatch, port misconfiguration) and burned via 20+ steps earlier than reaching a conclusion. Identical mannequin, similar alert. The runbook didn’t hand it the reply. It simply narrowed the search house sufficient {that a} 12-step finances was loads.

We now preserve seven runbooks, organized by namespace and alert kind. When an investigation comes again improper, the primary query we ask is “does the runbook cover this?” Not “do we need a better model?”

Identical alert, similar mannequin. Left: with runbook steerage. Proper: with out runbooks.

The mannequin journey

We examined seven fashions throughout self-hosted and managed internet hosting.

Self-hosted got here first, working on Spot GPUs managed by KubeAI (CNCF). The 7B mannequin couldn’t produce legitimate device calls. The 9B mannequin’s considering mode clashed with the ReAct loop and returned empty responses. A 14B appeared promising, however Spot evictions stored killing our runs, and chilly begins took 5 to eight minutes whereas Karpenter spun up nodes.

Then we tried managed APIs via VPC endpoints, which retains cluster information inside our infrastructure. Most fashions didn’t work; a number of choked on HolmesGPT’s immediate caching markers. Just one mannequin household handed every thing we would have liked: Korean output, Slack formatting, runbook retrieval, and cross-cluster log correlation. We additionally contributed a three-line upstream repair for pod identification authentication (PR #1850, merged).

As we speak we run a hybrid setup: self-hosted in staging, managed API in manufacturing. Switching between them is one YAML block:

modelList:

major:

mannequin: "provider/model-name" # swap supplier and mannequin ID

api_base: " # managed API or self-hosted

temperature: 0Price comes out to about $0.04 per investigation, roughly $12 a month. Pipeline, playbook, runbooks — all unchanged no matter backend.

What really mattered

Some numbers. Workload-level deduplication takes round 40 uncooked day by day alerts right down to about 12 distinctive investigations. Engineers learn a threaded abstract in below two minutes as a substitute of spending 15 to twenty on guide triage. Roughly 40% of investigations resolve on their very own: OOMKilled, ImagePullBackOff, and different recognized patterns the place Holmes matches a runbook and the basis trigger is clear.

Right here’s what we’d inform one other group beginning this.

Runbooks over fashions. We ran a managed take a look at the place the identical mannequin scored 4.6 out of 5 with runbooks and three.6 with out, on the very same alert. The exclusion guidelines we wrote into our runbooks moved the needle greater than any mannequin swap ever did.

Glue code is actual work. That 200-line playbook handles timing, dedup, routing, and thread matching. HolmesGPT handles reasoning. You want each.

Design for mannequin migration. We’ve swapped backends 3 times now with out touching the pipeline. The playbook is the secure core. The mannequin is the half you substitute.

What’s subsequent: we’re Inspektor Gadget (CNCF) to feed eBPF-level community metrics — TCP retransmits, connection latency — into the identical pipeline via Prometheus. The structure stays the identical. Holmes simply will get higher information to work with.

About STCLab: STCLab builds high-performance on-line site visitors administration software program. NetFUNNEL (Digital Ready Room) and BotManager (bot mitigation) serve hundreds of thousands of concurrent customers throughout 200 nations.

Questions? grace@stclab.com | stclab.com