Image by Author

# Introduction

Every day, customer service centers record thousands of conversations. Hidden in these audio files are goldmines of information. Are customers satisfied? What problems do they mention most often? How do emotions shift during a call?

Manually analyzing these recordings is challenging. However, with modern artificial intelligence (AI), we can automatically transcribe calls, detect emotions, and extract recurring topics, all offline and with open-source tools.

In this article, I’ll walk you through a complete customer sentiment analyzer project. You’ll learn how to:

- Transcribe audio files to text using Whisper

- Detect sentiment (positive, negative, neutral) and emotions (frustration, satisfaction, urgency)

- Extract topics automatically using BERTopic

- Display results in an interactive dashboard

The best part is that everything runs locally. Your sensitive customer data never leaves your machine.

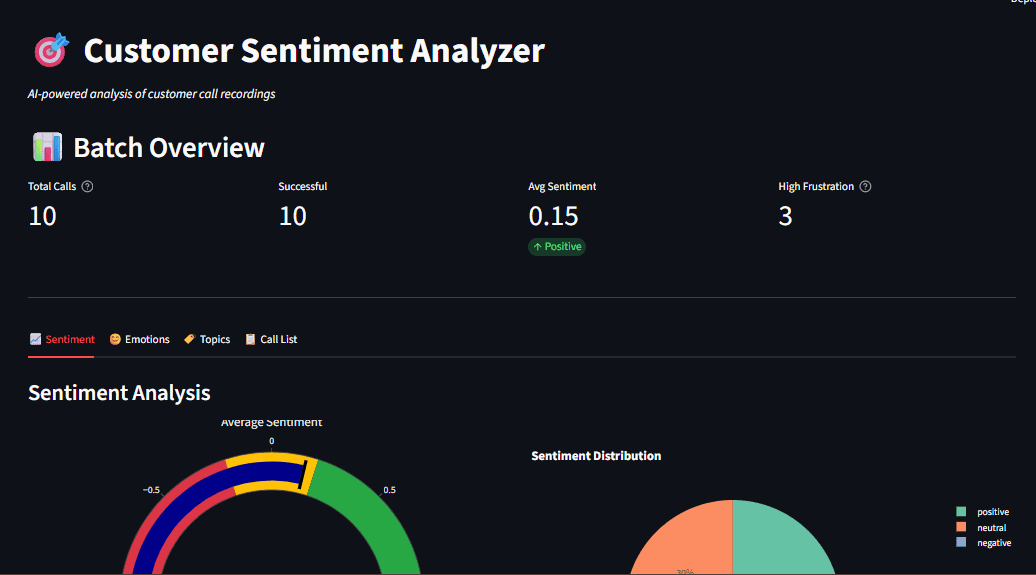

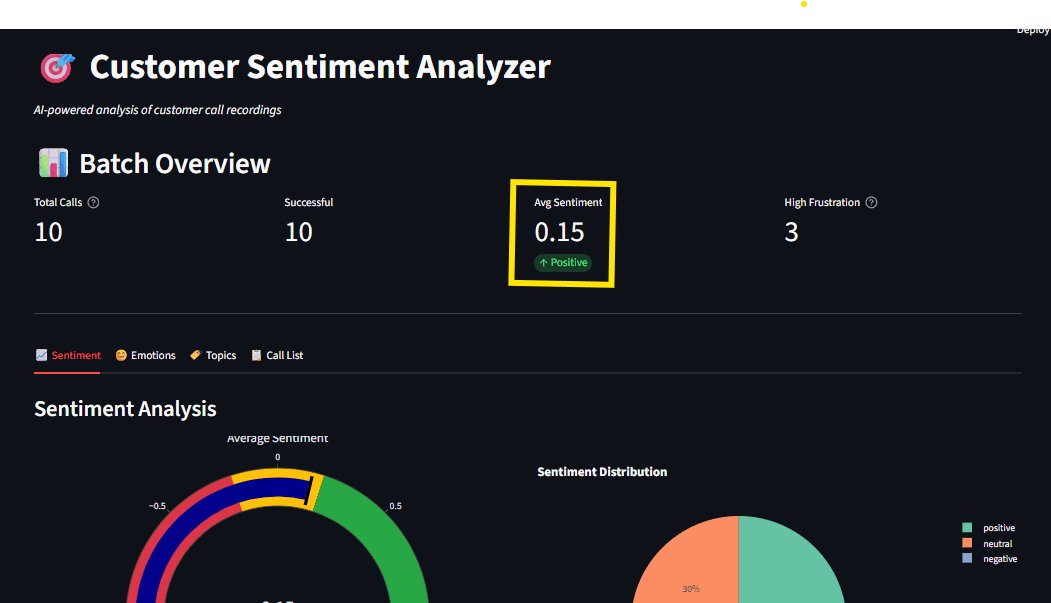

Fig 1: Dashboard overview showing sentiment gauge, emotion radar, and topic distribution

# Understanding Why Local AI Matters for Customer Data

Cloud-based AI services like OpenAI’s API are powerful, but they come with concerns: privacy, because customer calls often contain personal information; cost, because per-API-call pricing adds up quickly at high volumes; and dependence on an internet connection and rate limits. Running locally also makes it easier to meet data residency requirements.

This local AI speech-to-text tutorial keeps everything on your hardware. Models download once and run offline from then on.

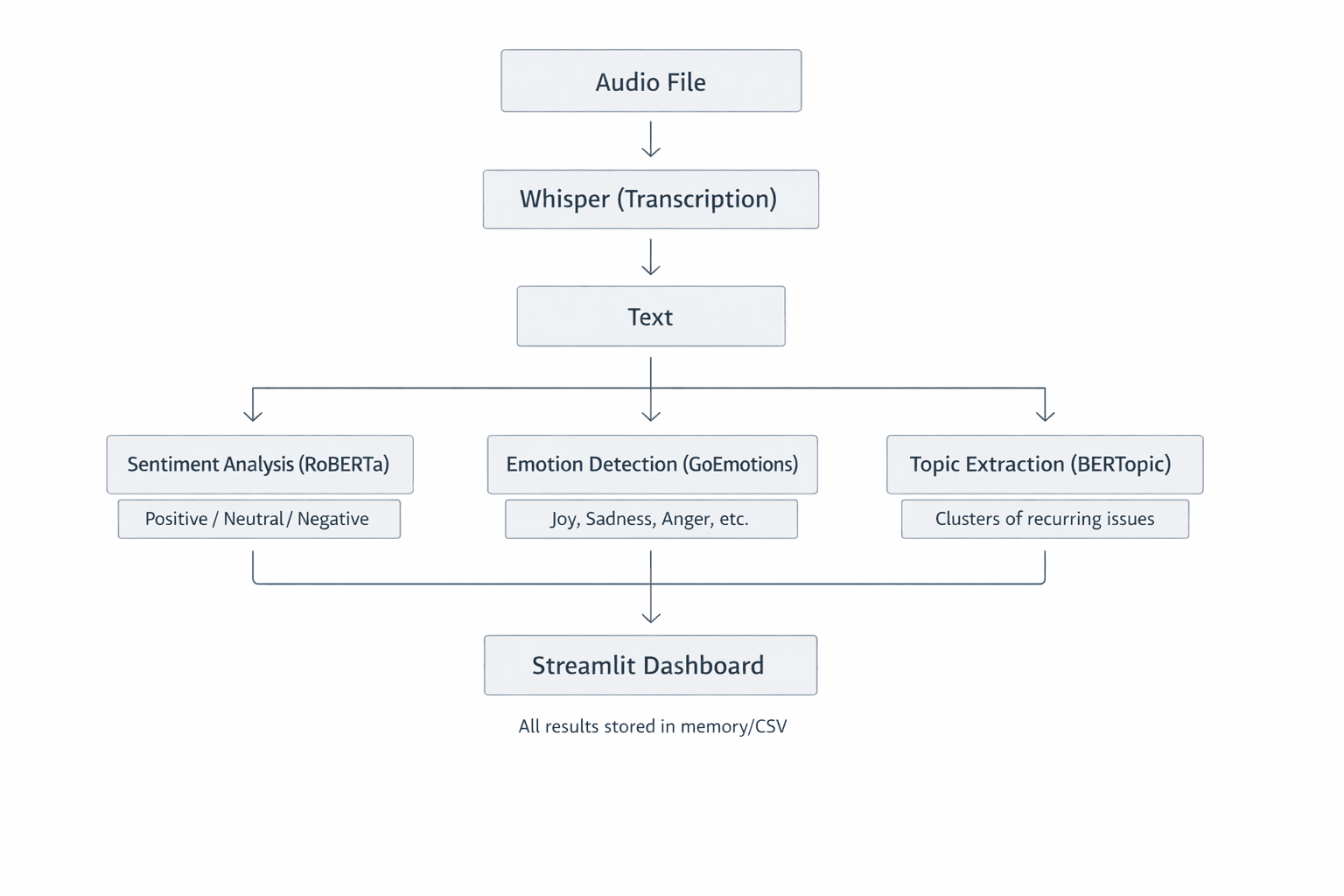

Fig 2: System architecture overview showing how each component handles one task well. This modular design makes the system easy to understand, test, and extend.

// Prerequisites

Before starting, make sure you have the following:

- Python 3.9+ installed on your machine

- FFmpeg installed for audio processing

- Basic familiarity with Python and machine learning concepts

- About 2GB of disk space for AI models

// Setting Up Your Project

Clone the repository and set up your environment:

git clone

Create a virtual environment:

Activate (Windows):

Activate (Mac/Linux):

Install dependencies:

pip install -r requirements.txt

The first run downloads AI models (~1.5GB total). After that, everything works offline.

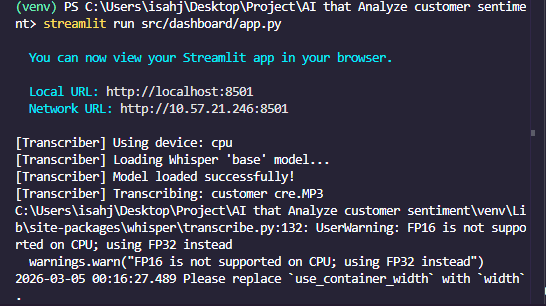

Fig 3: Terminal showing successful installation

# Transcribing Audio with Whisper

In the customer sentiment analyzer, the first step is to turn spoken words from call recordings into text. This is done by Whisper, an automatic speech recognition (ASR) system developed by OpenAI. Let’s look at how it works, why it’s a great choice, and how we use it in the project.

Whisper is a Transformer-based encoder-decoder model trained on 680,000 hours of multilingual audio. When you feed it an audio file, it:

- Resamples the audio to 16kHz mono

- Generates a mel spectrogram, a visual representation of frequencies over time that serves as a picture of the sound

- Splits the spectrogram into 30-second windows

- Passes each window through an encoder that creates hidden representations

- Decodes these representations into text tokens, one word (or sub-word) at a time

Think of the mel spectrogram as how machines “see” sound. The x-axis represents time, the y-axis represents frequency, and color intensity shows volume. The result is a highly accurate transcript, even with background noise or accents.
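To make the resampling and windowing steps concrete, here is a minimal pure-Python sketch of how a recording's length maps onto 30-second windows at 16 kHz. The helper name `window_bounds` is hypothetical, and Whisper handles this chunking internally, so the sketch only illustrates the arithmetic.

```python
SAMPLE_RATE = 16_000   # Whisper's input rate in Hz (from the steps above)
WINDOW_SECONDS = 30    # length of each window in seconds


def window_bounds(num_samples, sample_rate=SAMPLE_RATE, window_seconds=WINDOW_SECONDS):
    """Return (start, end) sample indices for consecutive fixed-length windows.

    Hypothetical helper for illustration only. The last window may be
    shorter than the others; Whisper pads it to the full length.
    """
    window_size = sample_rate * window_seconds
    return [
        (start, min(start + window_size, num_samples))
        for start in range(0, num_samples, window_size)
    ]


# A 70-second call at 16 kHz has 1,120,000 samples.
bounds = window_bounds(70 * SAMPLE_RATE)
print(bounds)
```

A 70-second call therefore yields two full windows and one partial window.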

// Code Implementation

Here is the core transcription logic:

    import whisper


    class AudioTranscriber:
        def __init__(self, model_size="base"):
            # Load the Whisper checkpoint; it is downloaded on first use
            self.model = whisper.load_model(model_size)

        def transcribe_audio(self, audio_path):
            result = self.model.transcribe(
                str(audio_path),
                word_timestamps=True,
                condition_on_previous_text=True,
            )
            # Keep only the fields the rest of the pipeline needs
            return {
                "text": result["text"],
                "segments": result["segments"],
                "language": result["language"],
            }

The model_size parameter controls the trade-off between accuracy and speed.

| Model | Parameters | Speed | Best For |
|---|---|---|---|
| tiny | 39M | Fastest | Quick testing |
| base | 74M | Fast | Development |
| small | 244M | Medium | Production |
| large | 1550M | Slow | Maximum accuracy |

For most use cases, base or small offers the best balance.

Fig 4: Transcription output showing timestamped segments
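As a rough illustration of working with timestamped segments, the sketch below turns a list of segment dictionaries into readable lines. It assumes each segment carries `start`, `end`, and `text` keys, as Whisper's segments do; the helper names and the sample data are made up for this example.

```python
def format_timestamp(seconds):
    """Convert a number of seconds to an MM:SS string."""
    minutes, secs = divmod(int(seconds), 60)
    return f"{minutes:02d}:{secs:02d}"


def format_segments(segments):
    """Render Whisper-style segments as '[MM:SS - MM:SS] text' lines."""
    lines = []
    for seg in segments:
        start = format_timestamp(seg["start"])
        end = format_timestamp(seg["end"])
        lines.append(f"[{start} - {end}] {seg['text'].strip()}")
    return lines


# Made-up sample in the shape of result["segments"]
sample_segments = [
    {"start": 0.0, "end": 4.2, "text": " Thanks for calling support."},
    {"start": 4.2, "end": 65.0, "text": " My invoice is wrong."},
]
for line in format_segments(sample_segments):
    print(line)
```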

# Analyzing Sentiment with Transformers

With text extracted, we analyze sentiment using Hugging Face Transformers. We use CardiffNLP’s RoBERTa model, trained on social media text, which is well suited to conversational customer calls.

// Comparing Sentiment and Emotion

Sentiment analysis classifies text as positive, neutral, or negative. We use a fine-tuned RoBERTa model because it understands context better than simple keyword matching.

The transcript is tokenized and passed through a Transformer. The final layer uses a softmax activation, which outputs probabilities that sum to 1. For example, if positive is 0.85, neutral is 0.10, and negative is 0.05, then the overall sentiment is positive.
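The softmax step can be reproduced in a few lines of plain Python. This is a minimal sketch with made-up logits, not output from the actual model; it only shows how raw scores become probabilities that sum to 1 and how the top label is picked.

```python
import math


def softmax(logits):
    """Turn raw scores into probabilities that sum to 1."""
    # Subtracting the max keeps exp() numerically stable; the result is unchanged
    peak = max(logits)
    exps = [math.exp(x - peak) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


labels = ["negative", "neutral", "positive"]
logits = [-1.2, 0.3, 2.4]  # made-up logits for illustration
probs = softmax(logits)
scores = dict(zip(labels, probs))
print(scores)
print("overall:", max(scores, key=scores.get))
```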

- Sentiment: Overall polarity (positive, negative, or neutral) answering the question: “Is this good or bad?”

- Emotion: Specific feelings (anger, joy, fear) answering the question: “What exactly are they feeling?”

We detect both for full insight.

// Code Implementation for Sentiment Analysis

    import torch
    import torch.nn.functional as F
    from transformers import AutoModelForSequenceClassification, AutoTokenizer


    class SentimentAnalyzer:
        def __init__(self):
            model_name = "cardiffnlp/twitter-roberta-base-sentiment-latest"
            self.tokenizer = AutoTokenizer.from_pretrained(model_name)
            self.model = AutoModelForSequenceClassification.from_pretrained(model_name)

        def analyze(self, text):
            inputs = self.tokenizer(text, return_tensors="pt", truncation=True)
            # Inference only, so skip gradient tracking
            with torch.no_grad():
                outputs = self.model(**inputs)
            probabilities = F.softmax(outputs.logits, dim=1)
            # Label order follows this checkpoint's class indices
            labels = ["negative", "neutral", "positive"]
            scores = {label: float(prob) for label, prob in zip(labels, probabilities[0])}
            return {
                "label": max(scores, key=scores.get),
                "scores": scores,
                "compound": scores["positive"] - scores["negative"],
            }

The compound score ranges from -1 (very negative) to +1 (very positive), making it easy to track sentiment trends over time.
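As one possible way to follow that trend, the sketch below computes the compound score for a few calls and smooths it with a trailing moving average. The score dictionaries are made-up examples in the shape returned by `analyze`, and `rolling_mean` is a hypothetical helper rather than part of the project.

```python
def compound_score(scores):
    """Positive minus negative probability, in the range [-1, 1]."""
    return scores["positive"] - scores["negative"]


def rolling_mean(values, window=3):
    """Trailing moving average; early points average over fewer values."""
    smoothed = []
    for i in range(len(values)):
        chunk = values[max(0, i - window + 1): i + 1]
        smoothed.append(sum(chunk) / len(chunk))
    return smoothed


# Made-up per-call scores, ordered by time
calls = [
    {"negative": 0.70, "neutral": 0.20, "positive": 0.10},
    {"negative": 0.40, "neutral": 0.30, "positive": 0.30},
    {"negative": 0.10, "neutral": 0.20, "positive": 0.70},
]
compounds = [compound_score(c) for c in calls]
trend = rolling_mean(compounds, window=2)
print(compounds)
print(trend)
```

A rising trend line, as in this toy data, would suggest sentiment improving from call to call.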

// Why Avoid Simple Lexicon Methods?

Traditional lexicon-based approaches in the style of VADER score text mainly from lists of positive and negative words. However, plain word counting often misses context:

- “This is not good.” A word-count lexicon sees “good” as positive.

- A transformer understands the negation (“not”) and reads the sentence as negative.

Transformers understand relationships between words, making them far more accurate for real-world text.
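The negation problem is easy to reproduce with a deliberately naive word counter. The sketch below is a toy: the word lists are invented for this example and are far simpler than any real lexicon tool such as VADER.

```python
POSITIVE_WORDS = {"good", "great", "helpful"}   # toy lexicon, invented here
NEGATIVE_WORDS = {"bad", "terrible", "slow"}


def naive_lexicon_score(text):
    """Count positive minus negative words, ignoring all context."""
    words = text.lower().replace(".", "").replace(",", "").split()
    pos = sum(word in POSITIVE_WORDS for word in words)
    neg = sum(word in NEGATIVE_WORDS for word in words)
    return pos - neg


# Both sentences get the same score because "not" is never considered
negated = naive_lexicon_score("This is not good.")
plain = naive_lexicon_score("This is good.")
print(negated, plain)
```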

# Extracting Topics with BERTopic

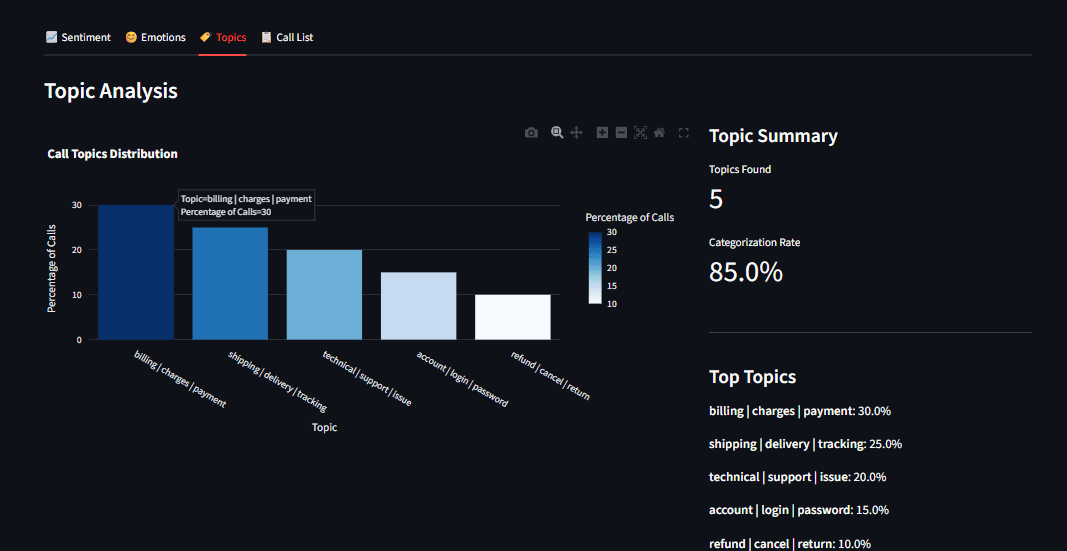

Knowing sentiment is useful, but what are customers talking about? BERTopic automatically discovers themes in text without you having to pre-define them.

// How BERTopic Works

- Embeddings: Convert each transcript into a vector using Sentence Transformers

- Dimensionality Reduction: UMAP compresses these vectors into a low-dimensional space

- Clustering: HDBSCAN groups similar transcripts together

- Topic Representation: For each cluster, extract the most relevant words using c-TF-IDF

The result is a set of topics like “billing issues,” “technical support,” or “product feedback.” Unlike older methods like Latent Dirichlet Allocation (LDA), BERTopic understands semantic meaning. “Shipping delay” and “late delivery” cluster together because they share the same meaning.
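To give a feel for the last step, here is a simplified, pure-Python sketch of class-based TF-IDF (c-TF-IDF) on transcripts that are assumed to be already clustered. It follows the general idea of weighting a word's frequency inside a cluster by how rare it is across all clusters; the tokenization, function names, and sample data are assumptions for illustration, and BERTopic's own implementation differs in detail.

```python
import math
from collections import Counter


def c_tf_idf(clusters):
    """Score words per cluster with a simplified class-based TF-IDF.

    `clusters` maps a cluster id to the list of documents assigned to it.
    score = (term frequency within the cluster)
            * log(1 + average words per cluster / term frequency overall)
    """
    # Treat each cluster as one long document of whitespace-split tokens
    counts = {
        cid: Counter(" ".join(docs).lower().split())
        for cid, docs in clusters.items()
    }
    total_per_word = Counter()
    for counter in counts.values():
        total_per_word.update(counter)
    avg_words = sum(total_per_word.values()) / len(counts)

    scores = {}
    for cid, counter in counts.items():
        cluster_size = sum(counter.values())
        scores[cid] = {
            word: (freq / cluster_size) * math.log(1 + avg_words / total_per_word[word])
            for word, freq in counter.items()
        }
    return scores


def top_words(scores, cid, n=2):
    """Return the n highest-scoring words for one cluster."""
    ranked = sorted(scores[cid].items(), key=lambda kv: kv[1], reverse=True)
    return [word for word, _ in ranked[:n]]


clusters = {  # made-up, pre-clustered transcripts
    0: ["my bill is wrong", "wrong charge on my bill"],
    1: ["my package is late", "late delivery of my package"],
}
scores = c_tf_idf(clusters)
print(top_words(scores, 0), top_words(scores, 1))
```

Words shared by both clusters, such as "my", score lower than words concentrated in one cluster, which is what makes the top words usable as topic labels.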

// Code Implementation

From topics.py:

    from bertopic import BERTopic


    class TopicExtractor:
        def __init__(self):
            self.model = BERTopic(
                embedding_model="all-MiniLM-L6-v2",
                min_topic_size=2,
                verbose=True,
            )

        def extract_topics(self, documents):
            topics, probabilities = self.model.fit_transform(documents)
            topic_info = self.model.get_topic_info()
            # Top five (word, score) pairs per topic; -1 is the outlier topic
            topic_keywords = {
                topic_id: self.model.get_topic(topic_id)[:5]
                for topic_id in set(topics) if topic_id != -1
            }
            return {
                "assignments": topics,
                "keywords": topic_keywords,
                "distribution": topic_info,
            }

Note: Topic extraction requires multiple documents (at least 5-10) to find meaningful patterns. Single calls are analyzed using the fitted model.

Fig 5: Topic distribution bar chart showing billing, shipping, and technical support categories
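A distribution like the one in the chart can be derived from the per-document topic assignments. The sketch below is one way to do it, assuming a list of integer topic ids in which -1 marks outliers; the helper name and sample data are hypothetical.

```python
from collections import Counter


def topic_distribution(assignments):
    """Share of documents per topic, skipping the -1 outlier label."""
    counts = Counter(topic for topic in assignments if topic != -1)
    total = sum(counts.values())
    if total == 0:
        return {}
    return {topic: count / total for topic, count in counts.items()}


# Made-up assignments, one topic id per transcript
assignments = [0, 0, 1, -1, 2, 0, 1, -1]
distribution = topic_distribution(assignments)
print(distribution)
```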

# Building an Interactive Dashboard with Streamlit

Raw data is hard to process. We built a Streamlit dashboard (app.py) that lets business users explore results. Streamlit turns Python scripts into web applications with minimal code. Our dashboard provides:

- Upload interface for audio files

- Real-time processing with progress indicators

- Interactive visualizations using Plotly

- Drill-down capability to explore individual calls

// Code Implementation for Dashboard Structure

    import streamlit as st

    # pipeline, create_sentiment_gauge, and create_emotion_radar
    # are defined elsewhere in the project


    def main():
        st.title("Customer Sentiment Analyzer")
        uploaded_files = st.file_uploader(
            "Upload Audio Files",
            type=["mp3", "wav"],
            accept_multiple_files=True,
        )
        if uploaded_files and st.button("Analyze"):
            with st.spinner("Processing..."):
                results = pipeline.process_batch(uploaded_files)
            # Show results side by side
            col1, col2 = st.columns(2)
            with col1:
                st.plotly_chart(create_sentiment_gauge(results))
            with col2:
                st.plotly_chart(create_emotion_radar(results))

Streamlit’s @st.cache_resource decorator ensures models load once and persist across interactions, which is essential for a responsive user experience.

Fig 7: Full dashboard with sidebar options and multiple visualization tabs

// Key Features

- Upload audio (or use sample transcripts for testing)

- View transcript with sentiment highlights

- Emotion timeline (if the call is long enough)

- Topic visualization using Plotly interactive charts

// Caching for Performance

Streamlit re-runs the script on every interaction. To avoid reloading heavy models on each run, we use @st.cache_resource:

    @st.cache_resource
    def load_models():
        return CallProcessor()

    processor = load_models()
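The load-once idea can be illustrated outside Streamlit with the standard library's `functools.lru_cache`. This is only an analogy, not how `@st.cache_resource` is implemented, and the loader below is a hypothetical stand-in for an expensive model load.

```python
from functools import lru_cache

load_count = 0  # tracks how many times the expensive loader really runs


@lru_cache(maxsize=None)
def load_models():
    """Stand-in for an expensive model load (hypothetical placeholder)."""
    global load_count
    load_count += 1
    return {"name": "placeholder-model"}


# Simulate three script re-runs, as happens on each user interaction
for _ in range(3):
    processor = load_models()

print("loader ran", load_count, "time(s)")
```

Every call after the first returns the same cached object, which is why repeated interactions stay fast.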

// Real-Time Processing

When a user uploads a file, we show a spinner while processing, then immediately display results:

    if uploaded_file:
        # The spinner stays visible until processing finishes
        with st.spinner("Transcribing and analyzing..."):
            result = processor.process_file(uploaded_file)
        st.success("Done!")
        st.write(result["text"])
        st.metric("Sentiment", result["sentiment"]["label"])

# Reviewing Practical Lessons

// Audio Processing: From Waveform to Text

Whisper’s magic is in its mel spectrogram conversion. Human hearing is logarithmic, meaning we’re better at distinguishing low frequencies than high ones. The mel scale mimics this, so the model “hears” more like a human. The spectrogram is essentially a 2D image (time vs. frequency), which the Transformer encoder processes similarly to how it would process an image patch. This is part of why Whisper handles noisy audio well; it sees the whole picture.
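The compression of high frequencies can be seen with one widely used Hz-to-mel formula. This is a sketch under that assumption; several mel variants exist, and Whisper's own filterbank may not use exactly this one.

```python
import math


def hz_to_mel(freq_hz):
    """Convert a frequency in Hz to mels (one common formulation)."""
    return 2595.0 * math.log10(1.0 + freq_hz / 700.0)


# Two equal 1,000 Hz gaps: one low in the spectrum, one high
low_gap = hz_to_mel(1000) - hz_to_mel(0)
high_gap = hz_to_mel(8000) - hz_to_mel(7000)
print(round(low_gap, 1), round(high_gap, 1))
```

The same 1,000 Hz step covers far more of the mel scale at the low end than at the high end, which mirrors how human hearing resolves pitch.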

// Transformer Outputs: Softmax vs. Sigmoid

- Softmax (sentiment): Forces probabilities to sum to 1. This is ideal for mutually exclusive classes, as a sentence usually isn’t both positive and negative.

- Sigmoid (emotions): Treats each class independently. A sentence can be joyful and surprised at the same time. Sigmoid allows for this overlap.

Choosing the right activation is critical for your problem domain.
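The difference is easy to see by applying both activations to the same scores. The logits and the 0.5 threshold below are made up for illustration and are not taken from the project's emotion model.

```python
import math


def softmax(logits):
    """Probabilities that compete with each other and sum to 1."""
    peak = max(logits)
    exps = [math.exp(x - peak) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def sigmoid(logits):
    """Independent probability per class; no constraint on the sum."""
    return [1.0 / (1.0 + math.exp(-x)) for x in logits]


emotions = ["joy", "surprise", "anger"]
logits = [2.0, 1.5, -3.0]  # made-up scores for one sentence

multi_label = dict(zip(emotions, sigmoid(logits)))
detected = [name for name, p in multi_label.items() if p >= 0.5]
print(detected)
print(sum(softmax(logits)), sum(sigmoid(logits)))
```

With sigmoid, both "joy" and "surprise" clear the threshold at once, whereas softmax would force them to share a single unit of probability.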

// Communicating Insights with Visualization

A good dashboard does more than show numbers; it tells a story. Plotly charts are interactive; users can hover to see details, zoom into time ranges, and click legends to toggle data series. This transforms raw analytics into actionable insights.

// Running the Application

To run the application, follow the setup steps from the beginning of this article. Test the sentiment and emotion analysis without audio files:

This runs sample text through the natural language processing (NLP) models and displays results in the terminal.

Analyze a single recording:

python main.py --audio path/to/call.mp3

Batch process a directory:

python main.py --batch data/audio/

For the full interactive experience:

python main.py --dashboard

Open in your browser.

Fig 8: Terminal output showing successful analysis with sentiment scores
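For readers curious how a command-line entry point with these three modes might be wired, here is a hedged sketch using `argparse`. It is not the repository's actual script: the function names are hypothetical, and making the flags mutually exclusive is an assumption made for this example.

```python
import argparse


def build_parser():
    """Hypothetical CLI wiring for the --audio, --batch, and --dashboard flags."""
    parser = argparse.ArgumentParser(description="Customer sentiment analyzer")
    # Assumption: exactly one mode is chosen per invocation
    group = parser.add_mutually_exclusive_group(required=True)
    group.add_argument("--audio", help="path to a single recording")
    group.add_argument("--batch", help="directory of recordings")
    group.add_argument("--dashboard", action="store_true", help="launch the dashboard")
    return parser


def describe(args):
    """Return a short description of the selected mode."""
    if args.dashboard:
        return "dashboard"
    if args.audio:
        return f"single:{args.audio}"
    return f"batch:{args.batch}"


args = build_parser().parse_args(["--audio", "path/to/call.mp3"])
print(describe(args))
```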

# Conclusion

We have built a complete, offline-capable system that transcribes customer calls, analyzes sentiment and emotions, and extracts recurring topics, all with open-source tools. It is a production-ready foundation for:

- Customer support teams identifying pain points

- Product managers gathering feedback at scale

- Quality assurance monitoring agent performance

The best part? Everything runs locally, respecting user privacy and eliminating API costs.

The complete code is available on GitHub: An-AI-that-Analyze-customer-sentiment. Clone the repository, follow this local AI speech-to-text tutorial, and start extracting insights from your customer calls today.

Shittu Olumide is a software engineer and technical writer passionate about leveraging cutting-edge technologies to craft compelling narratives, with a keen eye for detail and a knack for simplifying complex concepts. You can also find Shittu on Twitter.